These days I am working on building a next-generation mobile banking platform. One of the problems I was designing a solution for this week was how to handle configuration masters in Microservices. I am not talking about Microservices configuration properties here. I have not seen much written about this in the context of Microservices, so I thought I would document the solution that I am going forward with. But before we do that, let’s define what configuration masters are.

In my terminology, configuration masters are those entities of the system that are static yet configurable in nature. Examples include IFSC codes for banks, error messages, banks and their icons, account types, status types, etc. In a reasonably big application like mobile banking there will be anywhere between 50 and 100 configuration master entities. These configuration master entities have three characteristics:

- They don’t change often. This means they can be cached

- They don’t change often, but you still want the flexibility to update existing items or add new items if required. Typically, they are modified either using database scripts or by exposing APIs that some form of admin portal (used by IT operations people) uses to add new entries or modify existing entries

- The number of rows per configuration master entity is not more than 1000. This makes them suitable for local in-memory caching

As part of the solution design I wanted to come up with a solution that satisfies the following requirements:

- It should be dead simple to add a new configuration master. There should be minimal code changes required

- A single unified loader that can load any kind of configuration master

- Configuration master data should be available in the service’s main memory so that we don’t add latency to requests served by the service

- A Microservice should work with old data in case new data is unavailable. It will be eventually consistent

- Our Microservice should have a simple key-value interface that it can use to get the value for a key

- It should be possible for a Microservice to specify a different key generation strategy for a master in case the default key generation strategy does not work

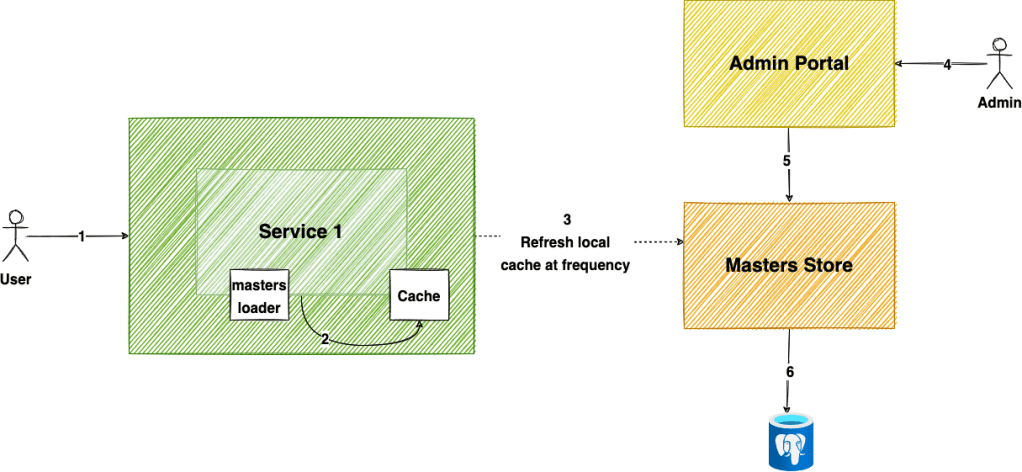

At a high level solution design looks like as shown below.

Following are the main components in the solution design shown above:

- Service 1: It is the Microservice that needs the master information. Services are implemented using Spring Boot

- masters-loader: It is a reusable shared library that implements how to fetch masters from a centralized masters store. It also has schedulers that are invoked based on the configuration defined in Service 1

- Cache: It is the master-specific in-memory cache. It is implemented in the masters-loader shared library

- Masters Store: It is Strapi headless CMS that gives us an easy to use GUI to create masters and expose their REST APIs. It is backed by a Postgres database. We can also add Redis cache in front of our Postgres for better read performance.

- Admin Portal: It is Strapi’s headless CMS

Now that we understand the main components let me explain how it works.

Let’s first cover the request path

- When a request is made to Service 1, the service uses the masters-loader library’s KV interface to fetch the value for a specific key from the in-memory cache. The value could be a String or an Object. The interface doesn’t accept keys as String. Instead, it accepts a KeyBuilder that builds the actual String key. This way we can support different key types.

- During the request we don’t have to hit the masters store. We expect data to be in the cache
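To make the request path concrete, here is a minimal sketch of what a KeyBuilder-based key-value interface could look like. The post does not show the real interface, so every name here (`KeyBuilder`, `CompositeKey`, `MasterCache`) and the `:` separator are assumptions for illustration only.

```java
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical interface: callers pass a KeyBuilder, never a raw String key.
interface KeyBuilder {
    String build();
}

// Hypothetical builder that joins several parts, e.g. bank code + branch code.
final class CompositeKey implements KeyBuilder {
    private final String[] parts;

    CompositeKey(String... parts) {
        this.parts = parts.clone();
    }

    @Override
    public String build() {
        return String.join(":", parts);
    }
}

// Key-value view over one master's in-memory cache.
final class MasterCache<V> {
    private final Map<String, V> entries = new ConcurrentHashMap<>();

    void put(KeyBuilder key, V value) {
        entries.put(key.build(), value);
    }

    // Reads never leave the process; a missing key yields an empty Optional.
    Optional<V> get(KeyBuilder key) {
        return Optional.ofNullable(entries.get(key.build()));
    }
}
```

Because `KeyBuilder` has a single method, a service with a simple key can pass a lambda such as `() -> "ERR_001"`, while a master that needs a different key generation strategy can supply its own builder class.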

Now, we will cover how master data is cached

- Each service specifies which masters it is interested in using a set of configuration properties as shown below.

## Masters loader config

service.masters.master1.enabled=true

service.masters.master1.frequency=1m

service.masters.master1.baseApiUri=https://masters-store/api/master1

service.masters.master2.enabled=true

service.masters.master2.frequency=6h

service.masters.master2.baseApiUri=https://masters-store/api/master2

In the configuration shown above our service needs two masters – master1 and master2. The master1 is refreshed every minute whereas master2 is refreshed every 6 hours.
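As a rough illustration of how these properties might be read, the sketch below parses one master's settings from a `java.util.Properties` object. The class names `Frequency` and `MasterConfig` are hypothetical, and the supported unit suffixes (`s`, `m`, `h`, `d`) are an assumption based on the `1m` and `6h` values above. In a Spring Boot service you would more likely bind these values with configuration properties, so treat this as a plain-Java stand-in.

```java
import java.time.Duration;
import java.util.Properties;

// Hypothetical helper: turns values such as "30s", "1m" or "6h" into a Duration.
final class Frequency {
    private Frequency() {}

    static Duration parse(String value) {
        String text = value.trim().toLowerCase();
        if (text.length() < 2) {
            throw new IllegalArgumentException("Invalid frequency: " + value);
        }
        long amount = Long.parseLong(text.substring(0, text.length() - 1));
        char unit = text.charAt(text.length() - 1);
        switch (unit) {
            case 's': return Duration.ofSeconds(amount);
            case 'm': return Duration.ofMinutes(amount);
            case 'h': return Duration.ofHours(amount);
            case 'd': return Duration.ofDays(amount);
            default: throw new IllegalArgumentException("Unknown unit in frequency: " + value);
        }
    }
}

// Hypothetical holder for the three properties of one master.
final class MasterConfig {
    final String name;
    final boolean enabled;
    final Duration frequency; // null when the master is not configured
    final String baseApiUri;  // null when the master is not configured

    private MasterConfig(String name, boolean enabled, Duration frequency, String baseApiUri) {
        this.name = name;
        this.enabled = enabled;
        this.frequency = frequency;
        this.baseApiUri = baseApiUri;
    }

    static MasterConfig from(Properties props, String name) {
        String prefix = "service.masters." + name + ".";
        boolean enabled = Boolean.parseBoolean(props.getProperty(prefix + "enabled", "false"));
        String frequency = props.getProperty(prefix + "frequency");
        String baseApiUri = props.getProperty(prefix + "baseApiUri");
        if (enabled && (frequency == null || baseApiUri == null)) {
            throw new IllegalArgumentException("Incomplete configuration for master: " + name);
        }
        return new MasterConfig(name, enabled,
                frequency == null ? null : Frequency.parse(frequency), baseApiUri);
    }
}
```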

- The masters-loader shared library registers a listener for org.springframework.boot.context.event.ApplicationReadyEvent. This event is published when the service boots up.

- The listener uses the configuration mentioned in the first point to register periodic tasks for each master. Only masters that are enabled are available. This is made possible using Spring’s @ConditionalOnProperty annotation as shown below.

@ConditionalOnProperty(name = "service.masters.master1.enabled", havingValue = "true")

public class Master1Loader {

}

- The periodic task makes an HTTP call to the centralized masters store and updates the cache. In the first implementation I have kept it dumb: I fetch all the master data for a specific entity and update the cache.
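One way to combine the "fetch everything and update the cache" step with the requirement that a service keeps working with old data is to swap in a new snapshot only when the fetch succeeds. The sketch below is an assumption about how that could be done, not the actual masters-loader code. `RefreshingMaster` is a hypothetical name, and the `Supplier` stands in for the HTTP call to the masters store.

```java
import java.util.Map;
import java.util.concurrent.atomic.AtomicReference;
import java.util.function.Supplier;

// Hypothetical cache for one master that is replaced wholesale on each refresh.
final class RefreshingMaster<V> {
    private final Supplier<Map<String, V>> fetchAll; // stand-in for the HTTP call
    private final AtomicReference<Map<String, V>> snapshot =
            new AtomicReference<>(Map.of());

    RefreshingMaster(Supplier<Map<String, V>> fetchAll) {
        this.fetchAll = fetchAll;
    }

    // Returns true if the cache was replaced, false if the old data was kept.
    boolean refresh() {
        try {
            Map<String, V> fresh = fetchAll.get();
            snapshot.set(Map.copyOf(fresh)); // readers see old or new, never a mix
            return true;
        } catch (RuntimeException e) {
            // Masters store unavailable or bad payload: keep serving stale data.
            return false;
        }
    }

    V get(String key) {
        return snapshot.get().get(key);
    }
}
```

Replacing the whole map through an `AtomicReference` keeps reads lock-free, which fits masters that have at most around a thousand rows.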

Adding a new master involves specifying the API path and how to create keys and their corresponding values. For some masters we want a key-value lookup, and for others we want the complete collection for a key. We are able to do this with minimal code because the loader is generic in nature.
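The two lookup styles mentioned above can be sketched with a pair of generic helpers: one that keeps a single value per key and one that keeps every row sharing a key. `MasterIndex` and its method names are hypothetical, and letting the later row win on duplicate keys is an assumption, not something the post specifies.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

// Hypothetical helpers that turn fetched rows into the two cache shapes.
final class MasterIndex {
    private MasterIndex() {}

    // One value per key, e.g. error code -> error message.
    // If two rows produce the same key, the later row wins (an assumption).
    static <T> Map<String, T> byUniqueKey(List<T> rows, Function<T, String> keyOf) {
        return rows.stream()
                .collect(Collectors.toMap(keyOf, Function.identity(),
                        (first, second) -> second));
    }

    // Complete collection per key, e.g. bank code -> all of its branches.
    static <T> Map<String, List<T>> byGroupKey(List<T> rows, Function<T, String> keyOf) {
        return rows.stream().collect(Collectors.groupingBy(keyOf));
    }
}
```

With helpers like these, a new master only needs to say which function extracts its key and which of the two shapes it wants.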

There are a couple of interesting properties of this solution:

- We can pretty much do a back-of-the-envelope calculation of how many requests will be made to our centralized masters store and provision resources accordingly. This does not depend on the number of requests received by our system. We need to know the frequency, the number of services, and their instance count. We can use that to calculate the number of requests our masters store needs to handle. This design makes use of the constant work pattern. Constant work patterns have three key properties:

  - One, they don’t scale up or slow down with load or stress.

  - Two, they don’t have modes, which means they do the same operations in all conditions.

  - Three, if they have any variation, it’s to do less work in times of stress so they can perform better when you need them most.

- Each master is isolated from the others. We can tune their refresh frequency depending on how frequently their data changes

- It is a simple design. You don’t need the complexity of message brokers like Kafka or managed brokers like Kinesis
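The back-of-the-envelope calculation from the first point can be written down directly: each enabled master contributes (instance count / refresh period) requests per second, and the total is the sum over all masters and services. `PollingLoad` is a hypothetical name and the instance counts used below are made-up inputs, not figures from the real platform.

```java
import java.time.Duration;
import java.util.List;

// Hypothetical description of one master polled by every instance of a service.
final class PollingLoad {
    final int instances;
    final Duration frequency;

    PollingLoad(int instances, Duration frequency) {
        if (instances < 0 || frequency.isZero() || frequency.isNegative()) {
            throw new IllegalArgumentException("instances must be >= 0 and frequency > 0");
        }
        this.instances = instances;
        this.frequency = frequency;
    }

    // Each instance issues one request per refresh period.
    double requestsPerSecond() {
        return instances / (frequency.toMillis() / 1000.0);
    }

    // Load on the masters store is the sum over all polled masters.
    static double total(List<PollingLoad> loads) {
        return loads.stream().mapToDouble(PollingLoad::requestsPerSecond).sum();
    }
}
```

For example, if a service ran five instances with the configuration shown earlier (master1 every minute, master2 every six hours), `PollingLoad.total` would come to roughly 0.084 requests per second, or about one request every 12 seconds, regardless of how much user traffic the service receives.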

When designing a system using schedulers, it is important to add jitter to each periodic task so that they are not all invoked at the same time. This ensures you don’t overload your downstream system. We added jitter to both the initial delay and the frequency.
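A minimal sketch of jitter on both the initial delay and the frequency, using only the JDK scheduler, might look like this. The names `Jitter` and `JitteredTask`, the uniform random distribution, and the idea of expressing jitter as a fraction of the base period are all assumptions. The post does not say how the jitter was actually implemented.

```java
import java.time.Duration;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.ThreadLocalRandom;
import java.util.concurrent.TimeUnit;

// Hypothetical jitter helpers.
final class Jitter {
    private Jitter() {}

    // Returns base plus or minus up to `fraction` of base (0.1 gives 90%..110%).
    static Duration around(Duration base, double fraction) {
        long baseMillis = base.toMillis();
        long spread = (long) (baseMillis * fraction);
        long offset = spread == 0
                ? 0
                : ThreadLocalRandom.current().nextLong(-spread, spread + 1);
        return Duration.ofMillis(baseMillis + offset);
    }

    // Spreads first runs uniformly over one full period: [0, base).
    static Duration initialDelay(Duration base) {
        long baseMillis = base.toMillis();
        return Duration.ofMillis(
                baseMillis <= 0 ? 0 : ThreadLocalRandom.current().nextLong(baseMillis));
    }
}

// Hypothetical self-rescheduling task: every run picks a fresh jittered delay.
final class JitteredTask {
    private JitteredTask() {}

    static void start(ScheduledExecutorService executor, Runnable task,
                      Duration frequency, double fraction) {
        scheduleNext(executor, task, frequency, fraction, Jitter.initialDelay(frequency));
    }

    private static void scheduleNext(ScheduledExecutorService executor, Runnable task,
                                     Duration frequency, double fraction, Duration delay) {
        executor.schedule(() -> {
            try {
                task.run();
            } finally {
                // Reschedule even if the task failed, unless we are shutting down.
                if (!executor.isShutdown()) {
                    scheduleNext(executor, task, frequency, fraction,
                            Jitter.around(frequency, fraction));
                }
            }
        }, delay.toMillis(), TimeUnit.MILLISECONDS);
    }
}
```

Rescheduling after each run, rather than using a fixed-rate schedule, is what lets the delay vary from one run to the next.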

When you build a system that uses scheduled polling, there is always some extra work that your system does. I think it is a tradeoff that you make for a simpler design. Until it becomes a real issue I think it is fine. We can always add a cache in front of the Postgres DB to avoid it becoming overloaded.

One more solution that I am thinking of is to add an /events endpoint to the Masters Store that returns all creates, updates, and deletes. Our periodic task can poll this endpoint and get all the data for a master. The masters store can maintain this in Redis so we don’t have to hit the db. When entries are added, modified, or deleted, we add the event to a master-specific sorted set. We return this sorted set from our events endpoint. We can eventually get away from polling altogether by supporting long polling or web sockets in our masters store.
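Since the /events idea is only outlined here, the following is a speculative sketch of the consuming side: a cache that applies change events in order and remembers the last sequence it has seen. The event shape (`sequence`, `type`, `key`, `value`), the class names, and the assumption that events arrive sorted by sequence (for example by sorted-set score) are all hypothetical.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical change event as it might be returned by an /events endpoint.
final class ChangeEvent {
    enum Type { CREATE, UPDATE, DELETE }

    final long sequence;
    final Type type;
    final String key;
    final String value; // ignored for DELETE

    ChangeEvent(long sequence, Type type, String key, String value) {
        this.sequence = sequence;
        this.type = type;
        this.key = key;
        this.value = value;
    }
}

// Hypothetical cache that is updated incrementally instead of being reloaded.
final class EventAppliedCache {
    private final Map<String, String> entries = new ConcurrentHashMap<>();
    private long lastSequence = 0;

    // Applies events newer than the last seen sequence; returns how many were applied.
    // Assumes the list is sorted by sequence in ascending order.
    synchronized int apply(List<ChangeEvent> events) {
        int applied = 0;
        for (ChangeEvent event : events) {
            if (event.sequence <= lastSequence) {
                continue; // already seen on an earlier poll
            }
            if (event.type == ChangeEvent.Type.DELETE) {
                entries.remove(event.key);
            } else {
                entries.put(event.key, event.value);
            }
            lastSequence = event.sequence;
            applied++;
        }
        return applied;
    }

    String get(String key) {
        return entries.get(key);
    }
}
```

Skipping events at or below the last seen sequence makes repeated polls of the same sorted set harmless.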


That is a great idea Shekhar. Strapi CMS can be indeed leveraged as a static data store here as you mentioned.

Can we think of the masters store as a CDN? We can refresh the cache inside Service 1 on cache timeout or max-age. We can also have a webhook in Strapi to invalidate caches at the Service 1 level manually in case there is a major change or if force invalidation is needed.

A periodic refresh will be triggered anyway by the cache when the timeout happens, right? So the question is whether we need scheduled invalidation.

From the /events endpoint story it seems you are talking more in terms of change data capture.

If using the Strapi middleware cache (https://strapi.io/blog/caching-in-strapi-strapi-middleware-cache), we can also add an ETag to support version-based cache invalidation.

Additionally, the cache is automatically busted every time a PUT, PATCH, POST, or DELETE request comes in, so I am not sure about the /events endpoint.

Additionally, Strapi events can be leveraged for create, update, delete, and publish/unpublish events.

https://docs.strapi.io/developer-docs/latest/development/backend-customization/webhooks.html#available-events

Not sure if my previous reply was posted so posting again.

Can we consider Strapi CMS as a content solution, for which content will be hosted on a dedicated CDN? If yes, can we think of it in the following way:

For the caching solution you mentioned, can timeout and maxAge work for the use case? The cache is busted anyway if there are updates on the database side.

https://www.npmjs.com/package/strapi-middleware-cache

This middleware caches incoming GET requests on the Strapi API, based on query params and model ID. The cache is automatically busted every time a PUT, PATCH, POST, or DELETE request comes in.

For the /events part it seems you are talking about CDC, as we do for databases or any information change.

For the /events part you may leverage https://docs.strapi.io/developer-docs/latest/development/backend-customization/webhooks.html#available-events. Here you can also have an invalidate REST endpoint in your application that will be called when force invalidation of the complete cache is required.

Thanks Swapnil for your response. We are using Strapi v4. The cache plugin [1] you mentioned is still under development for v4. I will be using it as soon as it is ready.

I am thinking of using a CDN in front of Strapi where the content in Strapi is user facing. As you might have understood, there are two use cases I am trying to achieve with Strapi: 1) user-facing semi-static content (labels, images), where the frontend will talk to Strapi, and 2) Microservices masters configuration data. I think a CDN will not be required in the second use case.

1. https://github.com/patrixr/strapi-middleware-cache/tree/feature/strapi-v4